AI Qualitative Analysis

March 2026

About the author: Kim Desjarlais is an Evaluation Assistant with Three Hive Consulting, with a background in administrative and managerial roles across nonprofit and community organizations. She brings experience managing data, overseeing enriched programs, and supporting operational functions, with strengths in program evaluation, staff coordination, and system management to enhance organizational effectiveness.


AI is becoming a part of every field, including evaluation. Like many in the workplace, I’ve been experimenting with AI and related applications in my work following our AI guidelines. This has made me realize the importance of having clear and well-defined guidelines for AI use, which should cover:

the specific AI tools you use and their purposes,

strategies to protect privacy and confidentiality,

a process for informing clients about AI usage and practices,

steps to obtain consent for collecting data with AI, and

ways to verify the accuracy of the AI-generated results.

I have used several AI tools in my work. For instance, I’ve used Copilot (Microsoft’s AI tool) to help with large-volume document reviews, literature reviews, environmental scans, and larger surveys with many qualitative questions. I’ve used CoLoop (a purpose-built AI qualitative analysis tool) to transcribe and help analyze interview and focus group data. I’ve also tried ResearchRabbit (an AI‑powered literature discovery and research exploration tool) for a literature review; however, my topic had few published articles, so that attempt wasn’t as successful.

In this article, I’ll outline some of what I’ve learned, including what AI does well, its key limitations, some practical tips for its use, and why human judgment is still essential.

What AI does well to support qualitative analysis

Transcription

AI transcription isn’t perfect, but it is quicker than doing it yourself. It is also quicker and cheaper than most transcription services. You will still need to read through and review the output, but overall, it’s definitely still a time saver.

I have learned that not all AI models are good at transcribing, so you will need to test a few to see which one works for you. I found CoLoop better than Copilot; I had less editing to do with CoLoop. When projects included words in other languages (e.g., Michif or common Indigenous terms), I needed to correct some of those words manually. That was common with any transcription service, AI or otherwise, even when I provided those terms to the software in advance.

Scanning documents for specific content

When given good prompts, AI can scan through large documents quickly, pulling out key details. This can save you hours of reading through many long documents. The key to being successful in your AI document review is your prompts. See tips below for some guidance on creating successful prompts!

Scanning web content

When looking for resources online, don’t just use traditional search engines like Google; try using an AI search or research agent as well. Using these tools can sometimes help you find other sources that you may miss. This approach can be applied to both literature reviews and environmental scans. See tips below for guidance on choosing the right agent.

For more information on how to use AI for an environmental scan, check out our article Using AI to do an environmental scan.

Creating quick themes

AI is good at providing quick analysis of the most dominant themes. If I need some quick insights for a sensemaking session, this might be a starting point for that discussion. Watch out for major risks listed below!

This is also true for large surveys with many qualitative responses. AI can quickly group these responses, giving you some quick insights into the common themes.

AI qualitative analysis limitations

AI can and does hallucinate

Sometimes, when no answer aligns with your prompt, the AI may generate one anyway, as if a missing answer weren’t acceptable. Hallucinations can be completely random and nonsensical (e.g., at Three Hive, we once had a response about tweeting about video games to a prompt about analyzing a survey), but hallucinations can also seem very realistic and plausible. It’s always wise to do your due diligence and check AI output against the source data, so you can have confidence in your reporting.

AI doesn’t understand nuance or sarcasm

Nuance and sarcasm are completely beyond most AI models. For example, suppose a survey response says, “These services should be accessible everywhere but hospitals.” AI would pick up on the word “hospitals,” and there’s a good chance it would link the services being discussed to hospitals, even though the speaker clearly intended to dissociate these services from hospitals.

AI also struggles to interpret sarcasm. For example, a speaker may say “so of course, everything went perfectly” sarcastically to illustrate how a project felt chaotic, but most AI models would take this sentence literally.

AI prioritizes dominant themes only

This is where I think AI falls short in qualitative analysis. AI is all about what responses occur the most. In my experience, most AI models do a good job of identifying major themes, but are inconsistent at identifying less frequent responses, some of which would be huge losses for your analysis and reporting.

For example, in interviews about people’s experience accessing a program, one person reported significant barriers to accessing the service due to the building’s lack of an accessible entrance. AI may completely miss this finding if only one of the many participants mentioned it, because it may not consider the response significant enough to include.

Practical tips for using AI in qualitative analysis

Confidentiality

Be sure you know what the AI model you’re using does with the data. Is your data helping to train the model, and to what extent? Where does the AI store data that it processes? How long is this data stored? Are there particular risks to the participants involved in your evaluation that should be considered? Before putting any data into an AI, be sure you know what happens to the data and what implications it may have for your participants.

Transparency and consent

Discuss any potential AI usage with the client or data owner before using AI in a project, including what AI models you will use and for what purposes. Discussing AI use ahead of time prevents unexpected issues. Explain how you’ll maintain confidentiality when using the AI tools and talk about both the benefits and limitations that AI brings to the project. If a client prefers their data not be accessed by AI, implement appropriate safeguards to accommodate their needs.

Use the right agent

Most AI models now have agents that are built to perform different types of work. There are customer service agents, research agents, and analyst agents (just to name a few). If an agent is built for your specific task, your output may be higher quality and you may need to give less instruction than you would with a generic AI model.

Your response is only as good as your prompt

A well-crafted prompt can be the difference between a sloppy, imprecise response and a good one. What makes this even trickier is that AI platforms are constantly updating, so your prompts may need constant updating and revision too! However, when setting up to do some larger-scale analysis, you may want to keep prompts consistent for that project, so you get a more consistent response.

Another important consideration is to instruct the AI to review only the provided documents and not to access other sources, as it will search the web for further resources without this type of guardrail in place.

Provide the AI context about what you’re looking for, including necessary background information, and instruct it not to hallucinate. If you think it did hallucinate, ask it.
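One way to keep prompts consistent across a project is to store the shared rules in a single template and fill in only the parts that change. The sketch below is a minimal illustration in Python, not a prescribed method: the template wording, the `build_prompt` name, and the example context are all assumptions made for this example, and you would paste the resulting text into whichever AI tool you use.

```python
# Hypothetical shared template: the rules stay fixed for the whole project,
# while the context and task change per document or question.
PROMPT_TEMPLATE = """\
Context: {context}

Task: {task}

Rules:
- Review only the documents provided. Do not search the web or use other sources.
- If the documents do not answer the question, say so instead of guessing.
- For every finding, include a direct quote and its page number so the work can be audited.
"""


def build_prompt(context, task):
    """Fill the shared template so every request in a project carries the same rules."""
    return PROMPT_TEMPLATE.format(context=context.strip(), task=task.strip())


# Example values are made up for illustration.
prompt = build_prompt(
    context="Evaluation of a community health program (hypothetical example).",
    task="List any barriers to access that participants describe.",
)
print(prompt)
```

Keeping the rules in one place means that when a platform update forces you to revise your wording, you change it once and every later request picks it up.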

Occasionally, the AI may not fully read an entire document, stopping partway through. In such cases, I simply point out that the review appears incomplete, and the AI usually finishes the task. I noticed this more with large-scale survey responses. Knowing how many responses there were helps flag this issue if it arises.
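If your survey export gives each response an ID and you ask the AI to list the response IDs under each theme, a quick count can show whether it stopped partway through. The sketch below assumes exactly that setup; the function name `find_unreviewed`, the theme names, and the ID numbers are hypothetical and only illustrate the idea.

```python
def find_unreviewed(response_ids, themed):
    """Return the IDs of survey responses that do not appear under any theme.

    response_ids: every response ID in the survey export.
    themed: dict mapping a theme name to the list of response IDs
            the AI placed under that theme.
    """
    covered = {rid for ids in themed.values() for rid in ids}
    return sorted(set(response_ids) - covered)


# Made-up example: a survey with 10 responses, where the AI's themed
# output only accounts for the first six.
all_ids = range(1, 11)
ai_output = {
    "Wait times": [1, 2, 5],
    "Staff friendliness": [3, 4, 6],
}

missing = find_unreviewed(all_ids, ai_output)
print(f"{len(missing)} of {len(all_ids)} responses not covered: {missing}")
```

Responses that appear under no theme are not necessarily errors, but they are the ones to read yourself, since a single uncategorized comment can hold an important finding.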

Be sure to audit its responses

Be sure you can audit the responses the AI gives you. Given how AI can hallucinate and doesn’t understand intent, you need to be able to double-check what it gives you.

When I’ve gotten an AI to theme a survey, I’ll ask it to provide all the responses for that category so I can audit its work. I’ve learned you need to clearly state that you want to audit the AI’s work for it to consistently include this information.

In the case of a document review, I’ve asked for page numbers and relevant quotes to be able to audit those responses.
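When the AI returns page numbers and quotes, part of the audit can be automated by checking that each quote really appears on the page it cites. The following is a rough sketch under the assumption that you already have the document's text split by page; `check_quotes`, `normalize`, and the sample text are hypothetical names and data for illustration. It only catches quotes that are missing or misattributed, so it does not replace reading the passage in context.

```python
import re


def normalize(text):
    """Lowercase, straighten curly quotes, and collapse whitespace."""
    text = text.replace("\u2019", "'").replace("\u2018", "'")
    text = text.replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def check_quotes(pages, citations):
    """Check each (page, quote) pair against the page text.

    pages: dict mapping page number to that page's text.
    citations: list of (page number, quote) pairs reported by the AI.
    Returns a list of (page, quote, found) tuples.
    """
    results = []
    for page, quote in citations:
        found = normalize(quote) in normalize(pages.get(page, ""))
        results.append((page, quote, found))
    return results


# Made-up page text and citations for illustration.
pages = {3: "Participants said the \u201centrance was not\naccessible\u201d for wheelchairs."}
citations = [
    (3, "entrance was not accessible"),  # present on the cited page
    (4, "entrance was not accessible"),  # wrong page number
    (3, "free parking"),                 # quote not in the document
]

for page, quote, found in check_quotes(pages, citations):
    status = "OK" if found else "CHECK MANUALLY"
    print(f"p.{page}: {quote!r} -> {status}")
```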

Qualitative analysis still needs a human touch

Even when using AI or software to support qualitative analysis, human oversight is essential to ensure nuanced insights are not overlooked. What may be less prevalent in the data can still be important. AI can’t make this judgment call. Even with AI analysis tools, you still need to review all responses, as the AI may miss something important to your evaluation. Relying solely on AI risks missing critical findings.

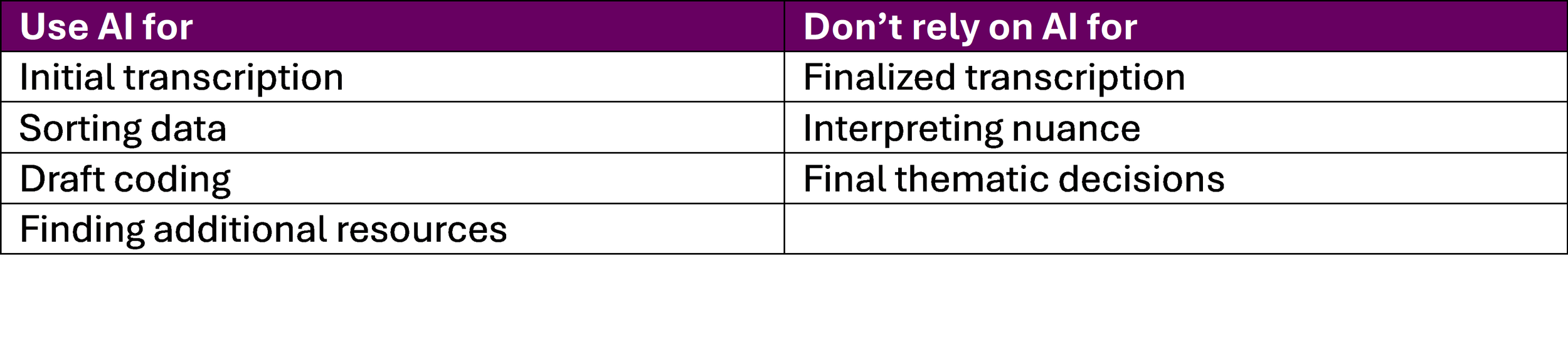

To summarize when to use AI and when not to, this chart below may help.